SentinelOne

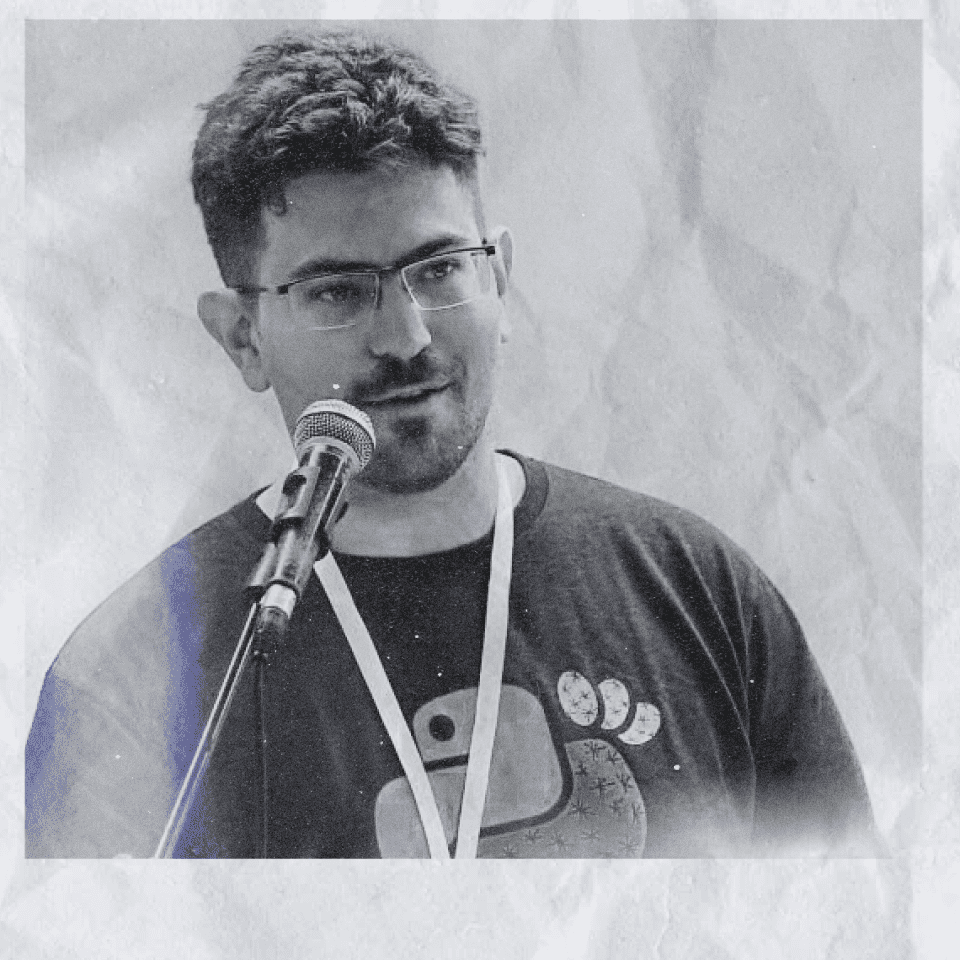

Dean Langsam

Quiver – Using Cutting Edge ML to detect interesting command lines for Hunters

What do GPT3, DALL-E2, and Copilot have in common? By grasping the structure and nature of language, they can generate text, images, and code that provide added value to a user. Models like these can now understand command lines, too!

Quiver (QUick Verifier for Threat HuntER) is an application aimed at understanding command lines and performing tasks such as attribution, classification, and anomaly detection, among many others.

DALL-E2 is known to take a prompt in human language and draw a stunning image that matches it. GPT3 and its like can generate an endless amount of text that reads as if a real person wrote it, and one such model recently even led a Google engineer to believe it had become sentient. GitHub's Copilot, meanwhile, can generate whole functions from a comment string.

Command lines are a language in themselves and can be learned the same way other languages can, and the applications can be as versatile as we want. Imagine feeding a command line into a prompt and getting back the probability that it is a reverse shell, that it was run by an Iranian actor, or that it was used for cybercrime. A single answer on its own may not help much, but with the power of language models, the threat hunter can get millions of answers in a matter of minutes, shedding light on the most important or urgent activities within the network.

In this session, we’ll demonstrate how we developed such a model, along with real-world examples of how the model is used in applications like anomaly detection, attribution, and classification.

Dean Langsam is a data scientist at SentinelOne, working at the intersection of data science, machine learning, deep learning, language models, Python scientific programming, data visualization, and Bayesian modeling.

His work focuses on combining all of these into new applications in the world of cybersecurity.